Current Projects

CORESENSE

CORESENSE is a Horizon Europe-funded project that aims to develop a theory of understanding, and a cognitive architecture derived from it, for autonomous robots. The project addresses the challenges of reliability, resilience, and trust in autonomous robots by creating a theory of understanding and awareness together with reusable software assets. It will demonstrate these capabilities by augmenting the resilience of drone teams, the flexibility of manufacturing robots, and the human alignment of social robots.

The goal is to improve the capability of autonomous robots to understand their open environments and complex missions, leading to improved flexibility, resilience, and explainability.

EMOROBCARE

The EMOROBCARE project is a multidisciplinary initiative aimed at designing and validating a low-cost, expressive social robot to assist therapists working with children with ASD Level 2. The project emphasizes affordability, modularity, and open-source development, leveraging technologies such as ROS2, Jetson-based hardware, and lightweight speech and vision models. Its primary goal is to evaluate the feasibility and therapeutic value of the robot in real-world therapy sessions.

Past Projects

SAFE-LY

The SAFE-LY project introduces an innovative assistive robot, based on the TIAGo mobile manipulator developed by PAL Robotics, to enhance patient safety by proactively identifying and mitigating hazardous situations, such as falls or misplaced objects in the space. According to the medical staff, a delayed response to these situations substantially hinders the recovery process for elderly patients.

From a methodological perspective, SAFE-LY adopts an alternative approach in which the users’ requirements result from a first phase of use of a simplified version of the potential solution. Users can then discuss their needs not only around a tangible concept but also after experiencing it in situ, which makes their feedback more specific and valuable. In this way, we can collect in-context needs that can be translated into requirements for further refinement of the initial system.

NHOA

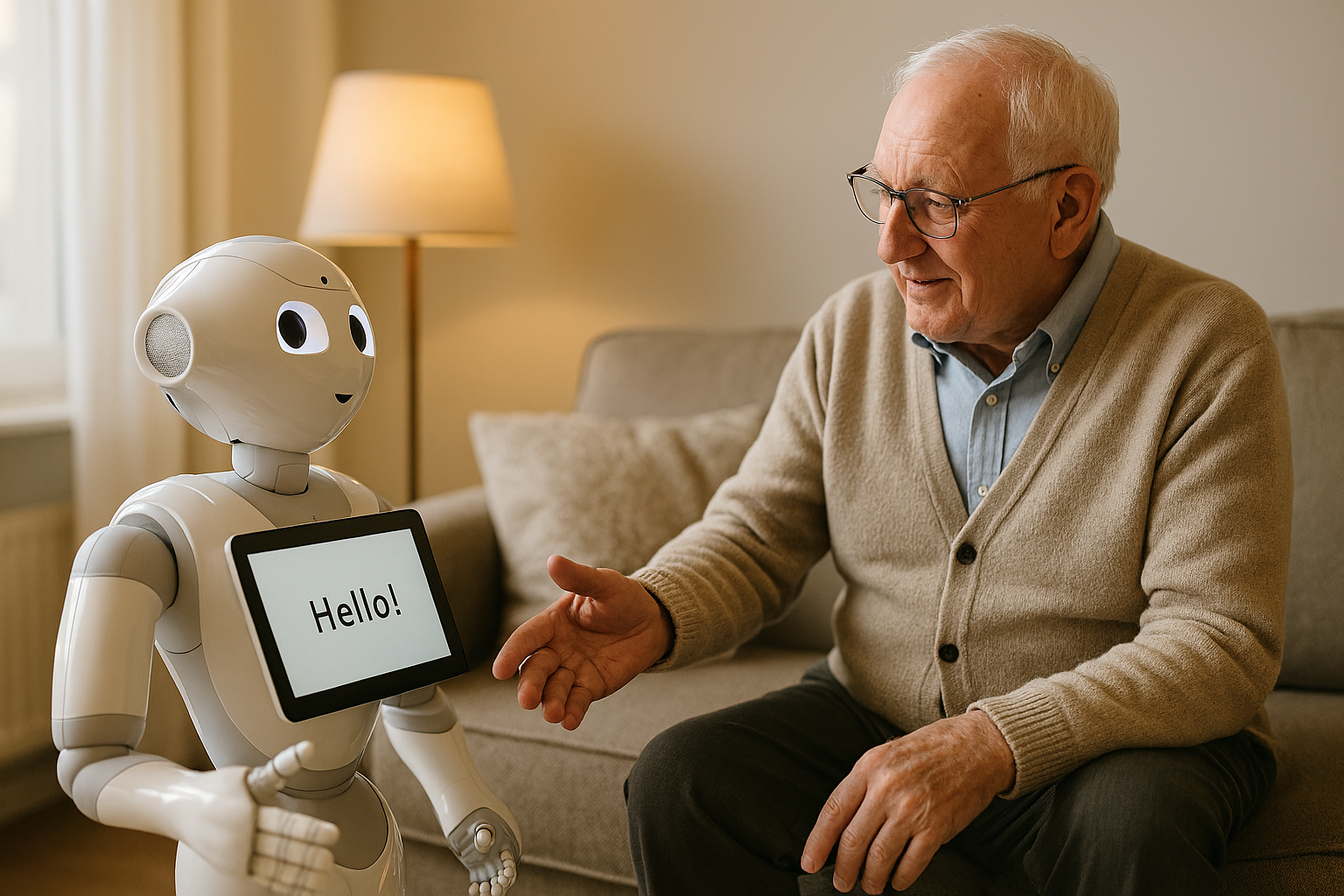

The NHOA project focuses on developing socially intelligent assistive robots for homes to support older adults living alone. The project aims to design robots that monitor, assist, and entertain users while encouraging physical and cognitive activities. It seeks to enhance well-being and comfort by integrating robust and adaptable robots that foster user acceptance and can operate autonomously in daily situations.

RAADiCal

The aim of the project is to help elderly and disabled people maintain a healthy lifestyle through intelligent robotic systems. This includes promoting social interactions, encouraging healthy eating, and assisting with physical and mental exercises. The project will develop a robotic system to support communication, monitoring, and motivation, with the assistance of a human operator when necessary.

AMIBA

To ensure the robotic system meets real-world needs, the project adopts a user-centric design approach. Initial interviews with caregivers at the Amiba Centre revealed key preferences and expectations for social robots like ARI. An observational study identified core activities where ARI could provide meaningful support: greeting users, announcing daily events, and assisting with physical therapy. Based on these insights, a second demo version was developed, featuring customizable physical exercises and enhanced interaction capabilities tailored to the center’s daily routines.

CMES

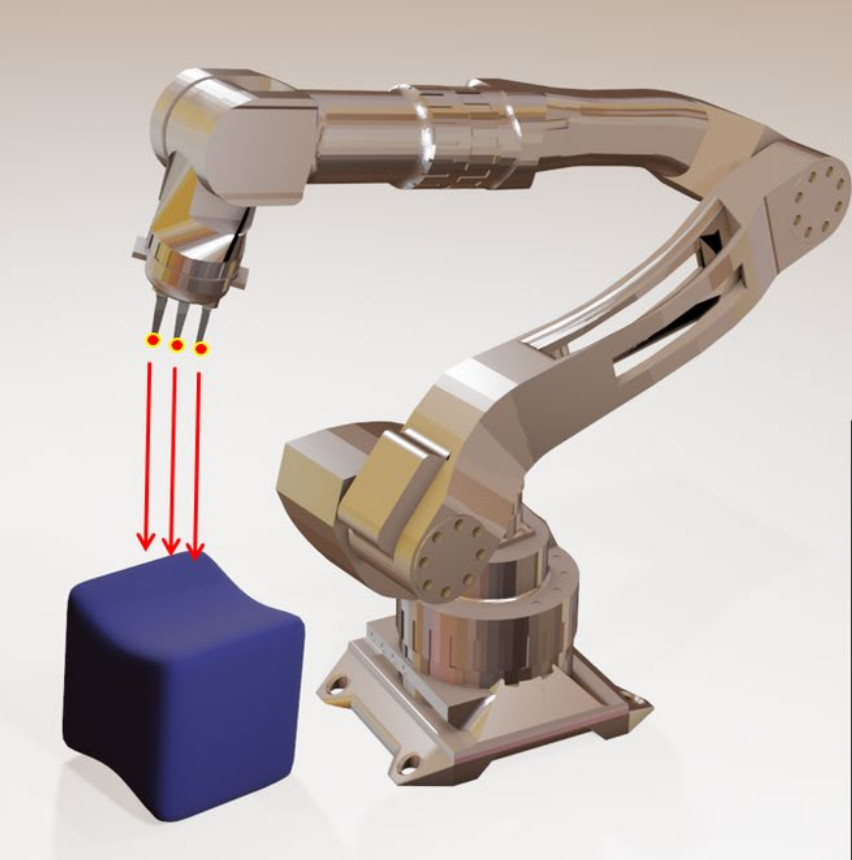

Manipulation of unknown objects is a topic of interest in the field of robotics. We expect robots to be capable of recognizing everyday objects in the environment so that they can support us in daily tasks, such as tidying up our homes, handing over working materials at our offices, or helping us with our grocery shopping, to name a few examples. Hence, the ability to distinguish between a variety of different objects, including learning previously unknown objects, is a significant subject of study in this regard. In this project, we study to what extent cross-modal perception, connecting the senses of sight and touch, can improve the ability of a robot’s cognitive system to adapt seamlessly to changes in the environment, increasing its autonomy and understanding of the world through multimodal learning.

ROBOT-TEA

This project aims to define a meaningful set of activities that a robot can engage in with children with autism. Using a participatory design approach, researchers observe interactions between children and their teachers to gain insights into how the robot should behave and what types of activities are most beneficial. The resulting prototype will be validated in real-world sessions, allowing researchers to assess its effectiveness and identify areas for improvement and refinement. This iterative process ensures the system is both responsive to children's needs and adaptable to diverse educational environments.

PEPPER-HEALTH

This project investigates how the Pepper robot can support elderly individuals in home healthcare settings. The first phase focuses on technology evaluation and use case identification in collaboration with hospitals and care centers. In the second phase, insights from the initial studies will inform the design and implementation of a functional prototype, which will serve as the main output of the project. The goal is to assess Pepper’s potential to enhance independent living, well-being, and care coordination for aging populations.

MESOI

In the MESOI project, we want to focus on the last two problems. We aim to develop a practical and reliable automatic method for detecting the most common plant diseases that can affect a typical cultivation. The solution is an autonomous machine-vision analysis method capable of detecting the growth stages of plants (e.g. presence of fruit) and the presence of plant diseases (e.g. aphids). It allows farmers to monitor the health of their crop and alerts them when a plant disease is present, pinpointing exactly where the disease has spread and reporting the size of the infected area. The proposed system consists of a lightweight rover equipped with a machine learning algorithm that continuously processes images of the crop.

ALIZ-E

The ALIZ-E project set out in 2010 to build the artificial intelligence (AI) for small social robots and to study how young people would respond to these robots. At the time we knew how to build robots that would interact with people for several minutes, but we did not know how to build robots that remained engaging for a longer period of time, such as hours or perhaps even days. Long-term human-robot interaction has tremendous potential, as the robots can then be used to bond with people, which can in turn be used to provide support and education. As an application domain, the project focused on children with diabetes, whom the robots help by offering training and entertainment.

CHRIS

The CHRIS project focuses on advancing safe Human-Robot Interaction (HRI) by investigating how humans engage in cooperative actions—both cognitively and physically—across representative application domains. These insights are then translated into robotic behaviors and embedded into robotic platforms. A key aspect of the project is the comprehensive assessment of safety, encompassing physical, behavioral, and cognitive dimensions, with the ultimate goal of developing robust, human-aware robotic systems that can interact safely and effectively in real-world environments.

MID-CBR

An Integrative Framework for Developing Case-based Systems. One area of research focuses on the use of case-based reasoning (CBR) to improve the navigation of an autonomous robot in unknown semi-structured environments. A first step is to identify problematic situations (such as dead ends or obstacle layouts that the robot is not able to avoid) and take the proper actions to avoid them. We integrate a CBR agent into an existing multi-agent navigation system in order to evaluate the performance of the CBR system.
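
As a rough illustration of the retrieval step in case-based navigation, the sketch below matches the robot's current sensor readings against a small case base and returns the action stored with the closest case. It is not the MID-CBR implementation: the `Case` structure, the three-value range readings (left, front, right), the example cases, and the Euclidean nearest-neighbour similarity are all assumptions made for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass
from math import dist


@dataclass(frozen=True)
class Case:
    """A stored navigation experience (hypothetical structure)."""
    readings: tuple[float, ...]  # assumed normalized ranges: left, front, right
    situation: str               # label of the situation the case describes
    action: str                  # action that resolved it in the past


# Illustrative case base; the values are invented for this example.
CASE_BASE = [
    Case((0.2, 0.1, 0.2), "dead end", "turn around"),
    Case((0.9, 0.2, 0.9), "obstacle ahead", "sidestep"),
    Case((0.9, 0.9, 0.9), "free space", "continue"),
]


def retrieve(readings: tuple[float, ...], case_base: list[Case] = CASE_BASE) -> Case:
    """Return the stored case closest to the current readings (1-nearest neighbour)."""
    return min(case_base, key=lambda case: dist(case.readings, readings))


if __name__ == "__main__":
    current = (0.25, 0.15, 0.2)  # walls close on all three sides
    best = retrieve(current)
    print(best.situation, "->", best.action)
```

A full CBR cycle would also adapt the retrieved action to the new situation and store the outcome as a new case; only retrieval is shown here.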